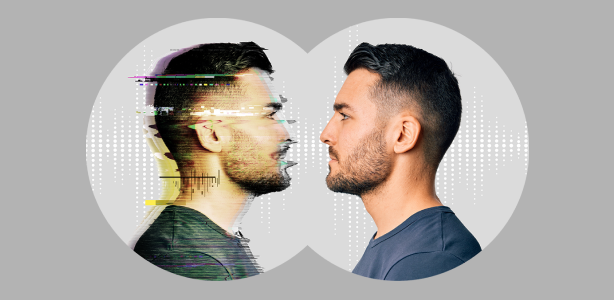

AI voice cloning pranks are rapidly spreading across WhatsApp groups in India, raising questions about privacy and misuse. What started as harmless fun is now blurring the line between entertainment and digital fraud.

AI voice cloning pranks have become a growing trend on WhatsApp across India, where users mimic friends, bosses, or family members using artificial intelligence tools. While many clips are shared for laughs, the scale and realism of these audios are triggering serious concerns among users and experts.

What Are AI Voice Cloning Pranks and How Do They Work

AI voice cloning refers to the use of machine learning tools that can replicate a person’s voice using short audio samples. Several apps and web tools now allow users to upload voice clips and generate new speech in the same tone and style.

In WhatsApp groups, users are using these tools to create prank audios. Common formats include fake instructions from a boss, a friend asking for money, or a family member saying something unusual. The realism is often convincing enough to confuse listeners, especially when shared without context.

The barrier to entry is low. Many tools require just a few seconds of recorded audio, making it easy for anyone with basic technical knowledge to participate in the trend.

Why the Trend Is Going Viral Across India

The virality of AI voice cloning pranks is driven by three factors. First is novelty. For many users, hearing a familiar voice say something unexpected creates instant engagement. Second is shareability. WhatsApp remains one of the most widely used messaging platforms in India, making distribution effortless.

Third is cultural behavior. Indian WhatsApp groups often function as informal entertainment hubs where jokes, memes, and videos circulate rapidly. AI-generated pranks fit seamlessly into this ecosystem.

Tier-2 and Tier-3 cities are also contributing significantly to this trend. With increasing smartphone penetration and cheaper data plans, users outside metro cities are actively creating and sharing such content.

When Humor Turns Into Risk: Deepfake and Scam Concerns

The biggest concern around AI voice cloning is misuse. While many pranks are harmless, the same technology can be used for fraud. Cases have been reported in several countries where cloned voices were used to impersonate executives or relatives and request urgent money transfers.

In India, similar risks exist. A cloned voice message asking for financial help could easily mislead someone, especially older users who may not be aware of such technologies. This creates a new category of digital scam that is harder to detect than traditional text-based fraud.

Cybersecurity experts have repeatedly warned that voice should no longer be treated as a reliable identity marker. The rise of deepfake audio is forcing a rethink of digital trust.

Legal and Privacy Implications in India

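India currently does not have a specific law targeting AI voice cloning. However, misuse can fall under existing provisions related to impersonation, fraud, and identity theft, such as Sections 66C and 66D of the Information Technology Act, 2000, and the cheating and impersonation offences of the Indian Penal Code, which was replaced by the Bharatiya Nyaya Sanhita in July 2024.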

Consent is another grey area. Most people whose voices are being cloned are unaware that their audio clips are being used. This raises questions about privacy rights and ethical boundaries.

As AI tools become more accessible, regulatory discussions are expected to intensify. Policymakers may need to define clearer rules around consent, data usage, and liability.

How Users Can Stay Safe on WhatsApp

Users need to adopt a more cautious approach when dealing with unexpected voice messages. Verification is critical. If a message requests money or sensitive information, it should be confirmed through a direct call or another trusted channel.

Avoid sharing personal voice recordings publicly, especially on open platforms. Even short clips can be used for cloning. Users should also stay updated on emerging digital threats to avoid falling victim to scams.

Awareness is currently the strongest defense. As the technology evolves, user behavior will play a key role in minimizing risks.

Takeaways

• AI voice cloning pranks are spreading quickly due to ease of use and high shareability

• The trend is popular across metro, Tier-2, and Tier-3 audiences in India

• The same technology can be misused for scams and impersonation fraud

• User awareness and verification are essential to stay safe

FAQs

Q1. What is AI voice cloning?

AI voice cloning is a technology that replicates a person’s voice using artificial intelligence and short audio samples.

Q2. Are AI voice cloning pranks illegal in India?

No law specifically bans AI voice cloning pranks, but misuse for fraud or impersonation can lead to legal action under existing laws.

Q3. How can I identify a fake voice message?

Look for unusual requests, urgency, or out-of-character statements and verify directly with the person.

Q4. Is it safe to share my voice online?

Sharing voice clips publicly can increase the risk of misuse, so it is advisable to limit exposure.
